Pritchard v Van Nes -- picture it – British Columbia…..<dissolve scene>

Mr. Pritchard and his family moved in next door to Ms. Van Nes and her family in 2008. The trouble started in 2011, when the Van Nes family installed a two-level, 25-foot-long “fish pond” with two waterfalls along their rear property line. The (constant) noise of the water disturbed and distressed the Pritchards, who started out (as one would) by speaking to Ms. Van Nes about their concerns.

Alas, rather than getting better, the situation kept getting worse:

the noise of the fish pond was sometimes drowned out by late-night parties thrown by the Van Nes family;

when the Pritchards complained about the noise, the next party included a loud explosion that Ms. Van Nes claimed was dynamite;

the lack of fence between the yards meant that the Van Nes children entered the Pritchard yard;

the lack of fence also allowed the Van Nes’ dog to roam (and soil) the Pritchard yard, as evidenced by more than 20 complaints to the municipality; and

the Van Nes family parked (or allowed their guests to park) so as to block the Pritchards’ access to their own driveway. When the Pritchards reported these obstructions to police, it only exacerbated tensions between the parties.

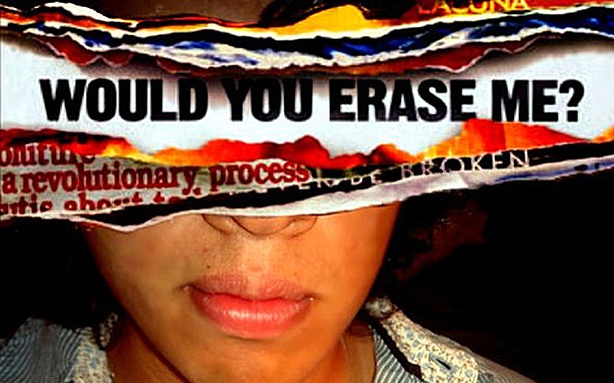

On June 9, 2014, tensions came to a head. Ms. Van Nes published a Facebook post that included photographs of the Pritchard backyard:

Some of you who know me well know I’ve had a neighbour videotaping me and my family in the backyard over the summers.... Under the guise of keeping record of our dog...

Now that we have friends living with us with their 4 kids including young daughters we think it’s borderline obsessive and not normal adult behavior...

Not to mention a red flag because Doug works for the Abbotsford school district on top of it all!!!!

The mirrors are a minor thing... It was the videotaping as well as his request to the city of Abbotsford to force us to move our play centre out of the covenanted forest area and closer to his property line that really, really made me feel as though this man may have a more serious problem.

The post prompted 57 follow-ups – 48 of them from Facebook friends, and 9 by Ms. Van Nes herself.

The narrative (and its attendant allegations) developed from hints to insinuations to flat-out statements that Mr. Pritchard was variously a “pedophile”, “creeper”, “nutter”, “freak”, “scumbag”, “peeper” and/or “douchebag”.

Not content to keep this speculation on the Facebook page, a friend of Ms. Van Nes (Mr. Parks) actually shared the post on his own Facebook page and encouraged others to do the same, and further suggested that Ms. Van Nes contact the principal of the school where Mr. Pritchard taught and “use his position as a teacher against him. I would also send it to the newspaper. Shame is a powerful tool.”

The following day, that same friend emailed the school principal, attaching the images from Ms. Van Nes’ page, some (one-sided) details of the situation and the warning that “I think you have a very small window of opportunity before someone begins to publicly declare that your school has a potential pedophile as a staff member. They are not going to care about his reasons – they care that kids may be in danger.”

That same day, another community member (Ms. Regnier, whose children had been taught by Mr. Pritchard and who believed him to be an excellent teacher and valuable resource for the school and community) became aware of Ms. Van Nes’ accusations and went to the school to inform Mr. Pritchard that accusations that he was a pedophile had surfaced on Facebook. After talking with Mr. Pritchard, she accompanied him to the office to speak with the principal (Mr. Horton), who had already received the email warning about Mr. Pritchard. Mr. Horton contacted his superior, who, Mr. Horton testified, seemed shocked, asking Mr. Horton whether he believed the allegations; Mr. Horton said he did not, although he testified that he was concerned as the allegations reflected poorly on him and the school. He testified that if the allegations were substantiated, Mr. Pritchard would have had his teaching license revoked.

Tracking the allegations back to Ms. Van Nes’ Facebook page, Mr. Pritchard’s wife printed out the posts and Ms. Van Nes’ friends list. They took this material with them to the police station to file a complaint. Later that evening a police officer arrived at the Pritchard home to collect more details – when the Pritchards attempted to show him the content on Facebook they found that it was no longer accessible.

Altogether, the post was visible on Ms. Van Nes’ Facebook page for approximately 27 ½ hours. Its deletion, however, did not remove copies that had been placed on other Facebook pages or shared with others, nor could it prevent the spread of information.

The effects have been many:

At least one child of one of Ms. Van Nes’ “friends” who commented on the posts was removed from Mr. Pritchard’s music programs. The next time Mr. Pritchard organized a band trip out of town and sought parent volunteers to be chaperones, he was overwhelmed with offers; that had never previously been the case. He feels that he has lost the trust of parents and students. He dreads public performances with the school music groups. Mr. Pritchard finds he is now constantly guarded in his interactions with students; for example, whereas before he would adjust a student’s fingers on an instrument, he now avoids any physical contact to shield himself from allegations of impropriety. He has cut back on his participation in extra-curricular activities. He has lost his love of teaching; he no longer finds it fun, and he wishes he had the means to get out of the profession. He considered responding to a private school’s advertisement for a summer employment position but did not because of a concern that the posts were still “out there”. Knowing that at least one prominent member of the community saw the posts and commented on them, he feels awkward, humiliated and stressed when out in public, wondering who might know about the Facebook posts and whether they believe the lies that were told about him.

Mr. Pritchard also testified as to how frightened he was that some of the posts suggested he should be confronted or threatened. Mr. Pritchard and his wife both testified that a short time after the posts, their doorbell was rung late at night, and their car was “keyed” in their driveway, leaving an 80 cm scratch that cost approximately $2,000 to repair. His wife also testified to finding large rocks on their driveway and their front lawn.

They also both testified that their two sons, both of whom attended the school where their father teaches, are aware of the Facebook posts, and have appeared to be upset and worried as to the consequences.

Mr. Pritchard testified that he thinks it is unlikely that he could now get a job in another school district. He acknowledged that in fact he has no idea how far and wide the posts actually spread, but he spoke with conviction as to this belief, and I find the fact that he holds this belief to be an illustration of the terrible psychological impact this incident has had.

Who Is Liable and For What?

It’s a horrible tale, and nobody wins. But what does the court have to say about it?

The claim for nuisance – that is, interference with Mr. Pritchard’s use and enjoyment of his land – is pretty clear. Both the noise from the waterfall and the two years of the Van Nes’ dog defecating in the Pritchards’ yard were clear interferences. The judge issues a permanent injunction that the waterfall not be operated between 10pm and 7am, and awards $2,000 for the waterfall noise and a further $500 for the dog feces.

The real issue here is, of course, the claim for defamation.

Is Ms. Van Nes responsible for her own defamatory remarks? Yes, she is. The remarks and their innuendo were defamatory, and were published to at least the persons who responded, likely to all 2,059 of her friends, and (given Ms. Van Nes’ failure to use any privacy settings) viewable by any and all Facebook users.

Is Ms. Van Nes liable for the republication of her defamatory remarks by others? Yes, she is, because she authorized those republications, which in this case happened both on Facebook and via the email to the school principal. Looking at all the circumstances here, especially her frequent and ongoing engagement with the comment thread, the judge finds that Ms. Van Nes had constructive knowledge of Mr. Parks’ comments soon after they were made.

Her silence, in the face of Mr. Parks’ statement, “why don’t we let the world know”, therefore effectively served as authorization for any and all republication by him, not limited to republication through Facebook. Any person in the position of Mr. Parks would have reasonably assumed such authorization to have been given. I find that the defendant’s failure to take positive steps to warn Mr. Parks not to take measures on his own, following his admonition to “let the world know”, leads to her being deemed to have been a publisher of Mr. Parks’ email to Mr. Pritchard’s principal, Mr. Horton.

Is Ms. Van Nes liable for defamatory third-party Facebook comments? Again, the answer is yes. The judge sets out the test for such liability as: (1) actual knowledge of the defamatory material posted by the third party; (2) a deliberate act or deliberate inaction; and (3) power and control over the defamatory content. If these three factors can be established, it can be said that the defendant has adopted the third party defamatory material as their own.

In the circumstances of the present case, the foregoing analysis leads to the conclusion that Ms. Van Nes was responsible for the defamatory comments of her “friends”. When the posts were printed off, on the afternoon of June 10th, her various replies were indicated as having been made 21 hours, 16 hours, 15 hours, 4 hours, and 3 hours previously. As I stated above, it is apparent, given the nine reply posts she made to her “friends”’ comments over that time period, that Ms. Van Nes had her Facebook page under, if not continuous, then at least constant viewing. I did not have evidence on the ability of a Facebook user to delete individual posts made on a user’s page; if the version of Facebook then in use did not provide users with that ability, then Ms. Van Nes had an obligation to delete her initial posts, and the comments, in their entirety, as soon as those “friends” began posting defamatory comments of their own. I find as a matter of fact that Ms. Van Nes acquired knowledge of the defamatory comments of her “friends”, if not as they were being made, then at least very shortly thereafter. She had control of her Facebook page. She failed to act by way of deleting those comments, or deleting the posts as a whole, within a reasonable time – a “reasonable time”, given the gravity of the defamatory remarks and the ease with which deletion could be accomplished, being immediately. She is liable to the plaintiff on that basis.

Having established all three potential forms of liability for defamation, Mr. Pritchard is awarded $50,000 in general damages and an additional $15,000 in punitive damages.

But I Was Just Venting…

A final thought from the judgment – one that takes into account the medium and the dynamic of Facebook.

I would find that the nature of the medium, and the content of Ms. Van Nes’ initial posts, created a reasonable expectation of further defamatory statements being made. Even if it were the case that all she had meant to do was “vent”, I would find that she had a positive obligation to actively monitor and control posted comments. Her failure to do so allowed what may have only started off as thoughtless “venting” to snowball, and to become perceived as a call to action – offers of participation in confrontations and interventions, and recommendations of active steps being taken to shame the plaintiff publically – with devastating consequences. This fact pattern, in my view, is distinguishable from situations involving purely passive providers. The defendant ought to share in responsibility for the defamatory comments posted by third parties, from the time those comments were made, regardless of whether or when she actually became aware of them.

So go ahead. Vent all you want. But your responsibility may extend further than you think…proceed with caution.